May 5, 2026

AI is transforming data compression in particle physics, addressing the massive data challenges faced by experiments like CERN's LHC, which generates 30 petabytes annually, and sPHENIX, handling 1 terabyte per second. Traditional methods often fall short due to the complex and sparse nature of this data. AI-based solutions, such as Variational Autoencoders (VAEs), neural networks, and Transformer models like NanoGPT, are stepping in to reduce data volumes while preserving critical details.

Key takeaways:

Each method has specific strengths and limitations, making the choice dependent on experimental needs like real-time processing, storage constraints, or data fidelity.

Variational Autoencoders (VAEs) excel at compressing data by converting high-dimensional detector data into a smaller latent representation, which serves as a compact code. From this compressed form, the decoder reconstructs the original signal. This approach is particularly effective for handling discontinuous and skewed ADC distributions.

At Brookhaven National Laboratory, researchers developed the Bicephalous Convolutional Auto-Encoder (BCAE) for the sPHENIX Time Projection Chamber at the Relativistic Heavy Ion Collider. The BCAE-VS model employs two decoders - one for segmentation and another for regression. This setup delivers a 75% boost in reconstruction accuracy and a 10% higher compression ratio, all within a model that is more than two orders of magnitude smaller than earlier versions.

The real game-changer here is the use of sparse convolution techniques. Unlike traditional dense convolutions, which process every voxel regardless of its content, sparse convolutions skip over all-zero operands, avoiding unnecessary matrix multiplications. This is especially important for data with very low occupancy levels. As Yi Huang and colleagues note:

"Data compression can be achieved by selectively down-sampling signals rather than resizing the input array".

By focusing on key signals and selectively down-sampling, this method retains critical trajectory information, paving the way for tackling large-scale data challenges with greater efficiency.

One of the biggest hurdles is scalability - processing 3.2 trillion voxels per second without overwhelming storage systems. Traditional autoencoders fall short because they rely on fixed-size latent representations. A code designed for dense collision events wastes space on sparse data, while one optimized for sparse events risks losing vital details during high-occupancy collisions.

The BCAE-VS model addresses this with variable-rate compression, dynamically adjusting its compression ratio based on data occupancy. This ensures optimal storage for both simple and complex collision events. This approach is critical when storage is limited to roughly 1 petabyte per day, while data generation rates range from 1 terabyte to several petabytes per second. The High Luminosity LHC upgrade, coming in 2029, will increase particle collision rates by a factor of ten, demanding storage resources 3 to 5 times larger than current projections.

These advanced models are already making an impact in real-world experiments. In November 2024, Brookhaven National Laboratory released the BCAE-VS model on GitHub and Zenodo. This model compresses data from the sPHENIX Time Projection Chamber, handling the 10.8% occupancy of non-zero values in simulated gold-gold collision data.

Meanwhile, researchers from Karlsruhe Institute of Technology and Imperial College London applied a Quantum Autoencoder (QAE) to 2016 CMS detector data. Using a "1 Particle - 1 Qubit" encoding scheme, they successfully distinguished top quark jets from background QCD jets with just 3N+1 trainable parameters for N particles.

In 2023, teams at Lund University and the University of Manchester introduced "Baler", an open-source tool for compressing multi-dimensional ROOT data from CMS and ATLAS experiments. This tool is specifically designed to address the resource challenges of the High Luminosity LHC era. These architectures are also being used in trigger systems for anomaly detection, flagging events with high reconstruction errors as potential indicators of new physics.

Neural network compression takes a completely different route compared to traditional algorithms like LZMA or ZLIB. Instead of treating data as a string of generic bytes, these methods are designed to identify and learn patterns within the data itself - patterns tied to physical parameters in particle detector readings. As Akshat Gupta and his team from the University of Manchester put it:

"Algorithms commonly employed for HEP data compression, such as LZMA and ZLIB, largely overlook the inherent structure of the data, including correlations between sequential measurements and dependencies among physical parameters".

In November 2025, the University of Manchester team introduced the BOA Constrictor, a Mamba-based lossless compression model. This tool achieved an impressive 44.14x compression ratio on CMS data, far surpassing LZMA-9's 27.14x baseline. What makes it so effective? It uses State Space Models, which are capable of processing lengthy data sequences while maintaining constant memory usage and linear complexity. For ATLAS data, BOA Constrictor boosted compression performance from 1.69x to 2.21x.

While data-specific learning methods are powerful, the real hurdle lies in scalability. The Large Hadron Collider generates 30 petabytes of data annually, making it essential for compression methods to handle such massive volumes efficiently. Traditional neural networks often fall short when it comes to real-time data streams.

This is where knowledge distillation comes into play. In May 2024, researchers at Karlsruhe Institute of Technology developed DistillNet, a method that compresses complex Graph Neural Networks (GNNs) into smaller Deep Neural Networks. These smaller "student" networks reduced trainable parameters by a factor of 27 and cut floating-point operations by 35. Even more impressively, they sped up CPU inference by nearly three orders of magnitude compared to the original "teacher" GNN. This kind of speed is critical for trigger systems, which need to process data within milliseconds. Such scalable solutions are indispensable in high-data-rate environments where experiments must make split-second decisions.

Neural network compression methods are becoming a cornerstone of modern particle physics experiments, ensuring that data compression aligns with real-time processing needs. The BOA Constrictor has been successfully tested on both ATLAS and CMS datasets, showcasing its ability to handle the diverse data structures of different detectors. On the other hand, DistillNet's knowledge distillation strategy has proven invaluable for the High Luminosity LHC upgrade. This upgrade demands that trigger systems make near-instantaneous decisions about which collision events to preserve, and DistillNet's efficiency is helping meet that challenge.

NanoGPT takes a different approach to data compression by using an autoregressive method that estimates byte-level dependencies, offering a fresh perspective compared to traditional neural network compression techniques.

NanoGPT compresses data by predicting byte-level probabilities in an autoregressive manner. Essentially, the model calculates the likelihood of each byte based on the data that came before it. This allows an entropy coder, like a range coder, to compress the data stream much closer to its theoretical limits. Unlike conventional methods, which often overlook byte-level relationships, NanoGPT dives deep into these dependencies. The result? Compression ratios that traditional techniques simply can't achieve. However, while this precision boosts efficiency, the challenge lies in scaling these methods to handle colossal datasets, such as those in high-energy physics.

NanoGPT uses a Transformer architecture to uncover correlations in particle physics datasets - where collision events can involve thousands of objects and generate up to 1 MB of data per event. However, the quadratic complexity of its computations (O(L²)) poses a significant hurdle when processing extremely long sequences. With experiments producing ever-growing volumes of data, real-time processing becomes a necessity. Researchers are now focused on overcoming these scalability limitations, which is essential for translating NanoGPT's potential from theory to real-world application.

By combining neural prediction with entropy coding, NanoGPT can transform predicted probabilities into highly compressed bytestreams. This approach not only conserves storage space but also retains the intricate patterns critical for scientific analysis. Its ability to adapt to the unique structures in datasets makes it especially valuable for particle physics, where even the smallest correlations can hold key insights.

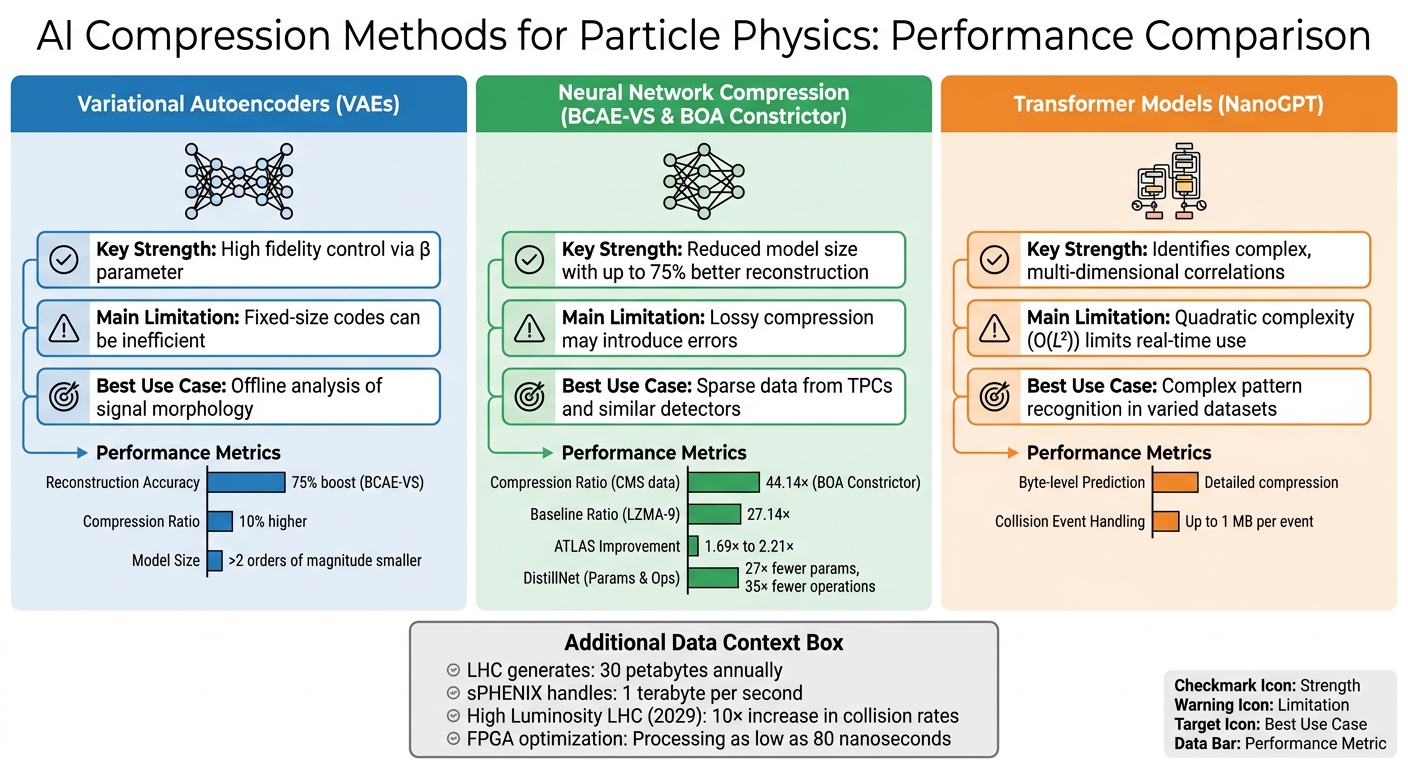

AI Data Compression Methods in Particle Physics: Performance Comparison

Let’s break down the key strengths and challenges of each AI compression method in the context of particle physics experiments.

Each approach has its own set of trade-offs. Variational Autoencoders (VAEs) stand out for their ability to control fidelity using the β parameter. This makes them a dependable choice for offline analyses where preserving the shape of signals is critical. However, VAEs often rely on fixed-size codes, which can be inefficient when dealing with sparse datasets. This design might also lead to information loss in denser datasets.

Neural Network Compression methods, like BCAE-VS, shine when processing sparse data, such as that found in the sPHENIX Time Projection Chamber. Yi Huang, a computational scientist at Brookhaven, highlights this advantage:

"The fewer voxels with meaningful values, the less computation our algorithm needs to do, resulting in faster data processing".

That said, their lossy compression nature can compromise data precision.

Transformer-based models, such as NanoGPT, are exceptional at identifying intricate correlations within collision events. They adapt well to new and unseen data patterns, but this adaptability comes with a steep cost: their quadratic computational complexity makes them less suitable for real-time processing.

Here’s a quick comparison of these methods:

| Method | Key Strength | Main Limitation | Best Use Case |

|---|---|---|---|

| VAEs | High fidelity control via β parameter | Fixed-size codes can be inefficient | Offline analysis of signal morphology |

| BCAE-VS | Reduced model size with up to 75% better reconstruction | Lossy compression may introduce errors | Sparse data from TPCs and similar detectors |

| NanoGPT | Identifies complex, multi-dimensional correlations | Quadratic complexity limits real-time use | Complex pattern recognition in varied datasets |

Advancements in hardware further help address some of these challenges. For instance, researchers at MIT and CERN optimized autoencoders to run on FPGAs for the LHC's third operational run. This allowed them to achieve processing times as low as 80 nanoseconds. While training these models often demands considerable computational resources, careful optimization makes them incredibly fast and efficient during inference - perfect for high-throughput environments.

Selecting the right AI compression method for particle physics hinges on the specific needs of your experiment. BOA Constrictor stands out with a 44.14× compression ratio on CMS datasets, surpassing LZMA-9's 27.14× ratio. This makes it an excellent choice for archival storage where maintaining data integrity is paramount, though its throughput is slower compared to simpler algorithms like ZLIB.

BCAE-VS shines in handling sparse data, offering high reconstruction accuracy while significantly reducing model size. It’s particularly useful for detectors like Time Projection Chambers, where data occupancy can be as low as 10⁻³. However, its lossy nature means some precision is traded for faster processing and storage efficiency.

Quantum autoencoders, such as the 1P1Q model, showcase exciting potential. With just 31 trainable parameters, it achieved an AUC of 0.872 for top quark jet discrimination, outperforming classical autoencoders that require around 2,500 parameters. Yet, practical application remains limited by current quantum hardware capabilities.

Each method has its strengths, tailored to different experimental priorities. BOA Constrictor is ideal for long-term storage, BCAE-VS excels in real-time sparse data processing, and quantum solutions hint at future applications in resource-limited scenarios. As Akshat Gupta and Caterina Doglioni from the University of Manchester explain:

"Minimising this cross-entropy loss therefore directly optimises our model for compression, as reducing DKL(P||Q) simultaneously improves both predictive accuracy and compression efficiency".

Ultimately, your choice should align with what matters most to your experiment - whether that’s maximizing storage, ensuring real-time processing, or achieving precise data reconstruction.

Lossless compression is perfect when preserving every bit of data is critical, ensuring no information is lost. On the other hand, lossy compression is better suited for situations where some loss of detail is acceptable in exchange for higher compression ratios. This trade-off can allow for greater data storage and better statistical efficiency. The choice depends on how much data loss the experiment can tolerate and what its goals are.

Variable-rate VAEs adjust the compression ratio for each event by tweaking the hyperparameter β. This parameter governs the balance between how much data is compressed and how accurately it can be reconstructed, allowing the model to find a middle ground between maintaining data quality and saving storage space.

Yes, it can. Transformer compression has proven capable of reaching the speeds necessary for real-time triggers. For example, FPGA implementations have achieved latencies of less than 2 microseconds. This performance aligns perfectly with the hardware trigger requirements at the Large Hadron Collider (LHC).